Hey there! Thanks for being here! I am an associate professor in the computer science and engineering department at the University of South Carolina (USC). My research involves advancing the state-of-the-art in AI/ML by developing novel algorithms and methods for solving some outstanding problems in:

- Sustainable AI [funded by NSF]: We have several ongoing projects on sustainable AI, including ML Inference Pipeline Adaptation, to find the right tradeoff between High Accuracy, Cost-Efficiency, and Sustainability. We also develop design strategies and patterns that developers can use to build sustainability into their system as an explicit goal.

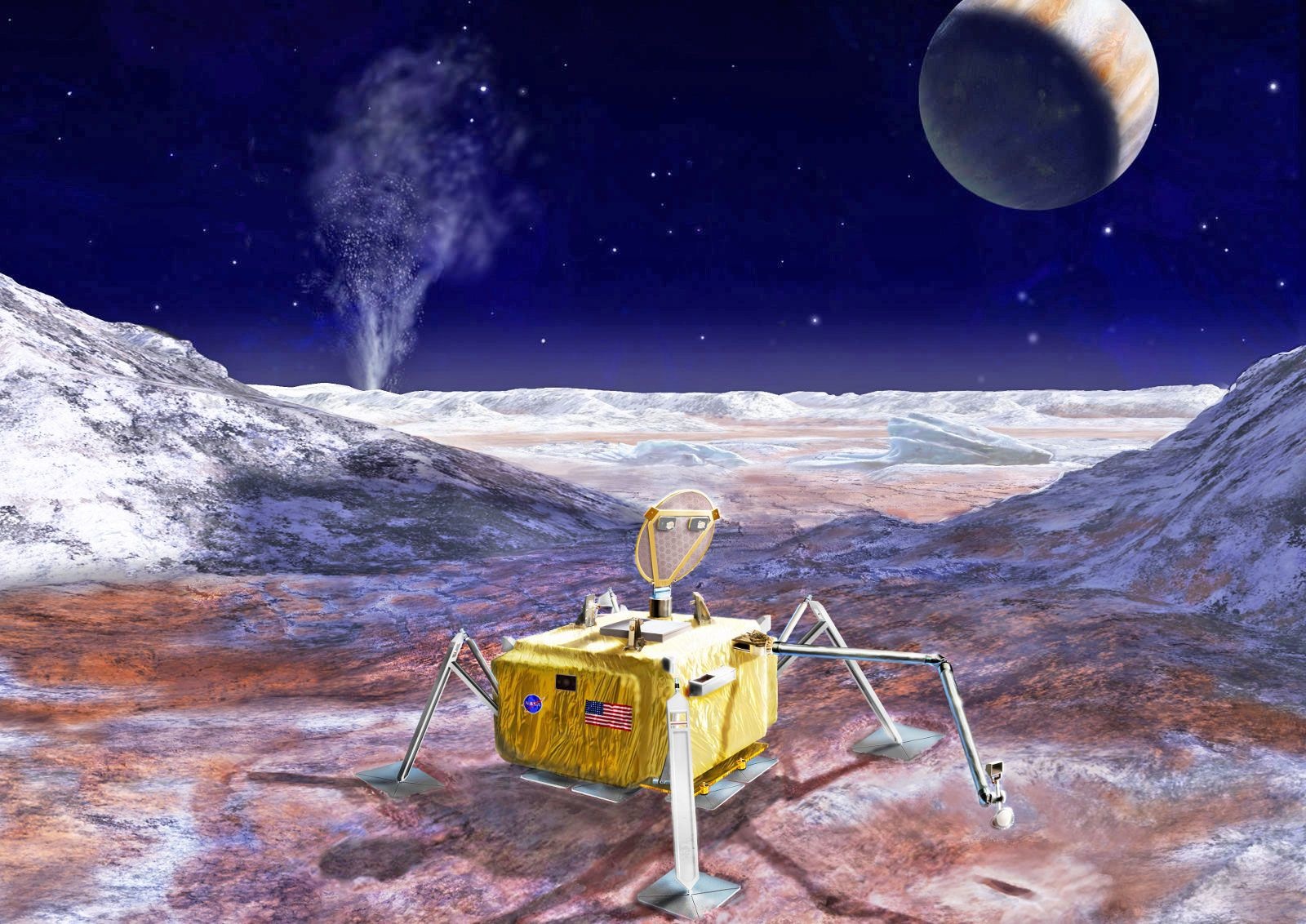

- Autonomous Systems and Robotics [funded by NASA]: e.g., (i) Our work on autonomy for space lander missions to the Ocean Worlds, such as Europa and Enceladus. Our project, RASPBERRY-SI, is a collaboration with CMU, UArk, and the University of York, as well as the testbed providers NASA JPL (physical testbed, called OWLAT) and NASA Ames (virtual testbed, called OceanWATERS). (ii) Our recent project on developing a framework for designing Modular Autonomy on ROS (MARS).

- Causal Reasoning [funded by NSF and Google]: e.g., (i) Our work on causal reasoning for performance debugging and optimizations in computer systems (Distributed, Hardware-Software, ML Pipelines), in collaboration with Christian Kaestner (CMU) and Baishakhi Ray (Columbia). (ii) As a former visiting researcher at Google, I worked on causal representation learning, enhancing the explainability of learned representation in deep neural networks via causal features for large-scale systems that rely on such representations for automated decision-making.

- Sciences and Engineering [looking for funding!]: e.g., (i) Our work on causal learning for cancer research with Phillip Buckhaults (USC's College of Pharmacy) and on optimal vaccine promotion for COVID-19 with Gregory Trevors (USC's College of Education). (ii) Our work on deep learning for symbolic mathematics with Kallol Roy (University of Tartu, Estonia).

I am particularly interested in the theoretical foundations of Causal Representation Learning, Adversarial ML, AutoML, and Transfer Learning. In addition to theory, I am excited about Sustainable AI, ML for Systems, and Systems for ML.

Before joining the faculty at the University of South Carolina, I was a postdoc in the School of Computer Science at Carnegie Mellon University (USA), working with Christian Kaestner, and, before that, I was a postdoc in the Department of Computing at Imperial College London (UK). I received my Ph.D. in Computer Science at Dublin City University (Ireland), and my advisor was Claus Pahl. I received an M.S. degree (research-based) in Systems Engineering from Amirkabir University of Technology (Iran) in 2006, and my advisor was Saeed Mansour. I also received a B.S. in Math & Computer Science from the Amirkabir University of Technology (Iran) in 2003.

Important Links

- Update [deadline: 2025/05/30]: I am hiring a postdoctoral researcher in Systems (ML for Systems and Systems for ML), Robotics, or Causal AI in my group at the University of South Carolina. There are excellent opportunities for interdisciplinary collaboration, including with colleagues in the Department of Mathematics working on data science, AI foundations, optimization, and graph theory. Applicants must have a Ph.D. (or have submitted their dissertation) by the start date and must be U.S. citizens, nationals, or permanent residents due to funding restrictions. If you are interested, please contact me.

- As of January 2023, I do not plan to hire new Ph.D. students until further notice, and I am so sorry that I may not be able to answer prospective student emails asking about available positions at AISys. You are always welcome to apply for a master’s or Ph.D. degree at the department. If you are admitted to a graduate program in our department, you will have the opportunity to apply for an assistantship (teaching or research) to help cover your stipend and tuition, either at the department (teaching) or within specific laboratories directed by our faculty members (research). Depending on common research interests, you will also have the opportunity to reach out to our faculty members to find the best match for mentoring.

- With Vijay Chidambaram, Romain Jacob, Neeraja J. Yadwadkar, and Ivo Jimenez, we co-founded JSys, a new diamond open-access journal for the systems community. I also co-chair two areas at JSys: Computer Architecture (with Devashree Tripathy) and Configuration Management (with Tianyin Xu). Please consider submitting your research work to JSys!

- Want to work with us? Read about AISys Lab.

- Want to know about AISys and our collaborators? Here you can find an overview of AISys lab members and their research.

- Want to know more about our research? Please check out our Core Research Areas and Publications.

- Want to join our weekly reading group? Please read about our AISys Reading Group.

- Want to join our student-led robotics team at USC? Read about Gamecock Robotics.

- Want to know whether I am available for AI consultancy? Please check out our Consultancy Services.

News

| 11/17/25 | I am honored to be selected as one of the ACM Symposium on Cloud Computing 2025's distinguished reviewers. Thank you, SoCC'25! |

| 01/15/25 | I’m thrilled and humbled to share that I’ve been recommended for tenure and promotion to the rank of Associate Professor at the University of South Carolina! This achievement is not mine alone—it reflects the unwavering support, love, and encouragement I’ve received from my family, friends, mentors, colleagues, and students. My family’s strength and belief in me have been my foundation throughout this journey, and I couldn’t have reached this milestone without them. I’m deeply grateful for this opportunity to continue teaching, learning, and growing in my role. Here’s to the next chapter of pushing boundaries and exploring new possibilities together! |

| 05/07/24 | Saeid Ghafouri wrote a blog post about his amazing project at AISys, in particular, about how he has done extensive experiments using Chameleon cloud resources: Optimizing Production ML Inference for Accuracy and Cost Efficiency. |

| 04/15/24 | IPA: Inference Pipeline Adaptation to Achieve High Accuracy and Cost-Efficiency, has been accepted for publication in the Journal of Systems Research (JSys)! The IPA artifact was fully reproduced by the JSys artifact team. I am so proud of this work with amazing collaborators. Well done, Saeid! |

| 03/28/24 | I am honored to receive the SEAMS Most Influential Paper Award for our paper entitled "Pooyan Jamshidi, Aakash Ahmad, Claus Pahl: Autonomic Resource Provisioning for Cloud-based Software. SEAMS 2014" from my beloved community! This would not have been possible without an amazing advisor such as Claus and an awesome collaborator such as Aakash! |

| 01/03/24 | I am delighted to serve as an Associate Editor of ACM Transactions on Software Engineering and Methodology (TOSEM) and as a PC member at the European Conference on Artificial Intelligence (ECAI'24). Please consider submitting to these venues! |

| 12/13/23 | FlexiBO will be presented at AAAI'24 in Vancouver, Canada! |

| 11/28/23 | I had the honor to present our recent work on InfAdapter and IPA in the Engineering Self-Adaptive Software Systems class at the University of Waterloo remotely. Thanks, Prof. Ladan Tahvildari! |

| 11/10/23 | Shahriar Iqbal has successfully defended his PhD on Performance Modeling, Debugging, and Optimization of Configurable Computer Systems: A Causal and Statistical Machine Learning Perspective. With 3 A* publications at EuroSys, SoCC, and JAIR, he is among a few exceptional Ph.D. graduates at UofSC. Not to mention that Shahriar is officially the first Ph.D. graduate out of my group, and I am so proud of his achievements. Well done, Shahriar! |

| 09/01/23 | CAMEO: A Causal Transfer Learning Approach for Performance Optimization of Configurable Computer Systems has been accepted for publication in the ACM Symposium on Cloud Computing (SoCC)! Well done, Shahriar! |

| 04/30/23 | Rethinking Robust Contrastive Learning from the Adversarial Perspective has been accepted at New Frontiers in Adversarial Machine Learning at ICML'23 (AdvML-Frontiers'23). Well done, Fatemeh and Mehdi! |

| 04/30/23 | CaRE: Finding Root Causes of Configuration Issues in Highly-Configurable Robots has been accepted for publication in the IEEE Robotics and Automation Letters (RA-L). Well done, Abir and Sonam! I am thrilled to have the opportunity to be a part of the fantastic collaboration with Bradley Schmerl, Javier Cámara, David Garlan, Jason M. O’Kane, Ellen Czaplinski, and Katherine Dzurilla! |

| 04/28/23 | Our recent collaborative work, Reconciling High Accuracy, Cost-Efficiency, and Low Latency of Inference Serving Systems, has been accepted at EuroMLSys'23! InfAdapter code is also available on GitHub. |

| 03/15/23 | A new version of our paper, Pretrained Language Models are Symbolic Mathematics Solvers too!, with some new experimental results and theory, is on ArXiv! Thanks for the great work led by Kimia Noorbakhsh and Kallol Roy! |

| 03/11/23 | FlexiBO: a Decoupled Cost-Aware Multi-Objective Optimization Approach for Deep Neural Networks, has been accepted for publication in the Journal of Artificial Intelligence Research (JAIR)! Well done, Shahriar! |

| 01/20/23 | Shahriar Iqbal successfully defended his Ph.D. proposal, entitled Performance Modeling, Debugging, and Optimization of Highly Configurable Computer Systems: A Causal and Statistical Machine Learning Perspective. Well done, Shahriar ♡. |

| 01/19/23 | CaRE: Finding Root Causes of Configuration Issues in Highly-Configurable Robots is on ArXiv. Well done, Abir and Sonam! So proud of this work ♡. |

| 01/16/23 | Prof. Danny Weyns (Katholieke Universiteit Leuven) is visiting the AISys lab this week. |

| 08/30/22 | A new version of FlexiBO, a Decoupled Cost-Aware Multi-Objective Optimization Approach for Deep Neural Networks, with some new proofs and connections with multi-objective optimization theory, is on ArXiv! Well done, Shahriar! |

| 08/09/22 | I am thrilled that our collaborative effort with Eunsuk Kang (Carnegie Mellon University), Mehdi Mirakhorli, and Callie Babbitt (Rochester Institute of Technology) on Software-Driven Sustainability has been funded by NSF. Thank you, NSF, for funding research on Sustainability in Computing! ♡ |

| 08/01/22 | Three members of the AISys lab at UofSC (Sonam Kharde, Abir Hossen, and I) will be at NASA JPL in Pasadena, CA from August 1st to August 21st. We are hosted by the Robotic Surface Mobility Group (Hari Nayar). We will be testing and evaluating the AI-based autonomy developed by the RASPBERRY-SI project on the Ocean Worlds Lander Autonomy Testbed (OWLAT). |

| 08/01/22 | Gamecock Robotics now has a website. Stay tuned! |

| 06/01/22 | Thanks, Megan, for writing a piece about the UofSC breakthrough award. |

| 03/15/22 | Unicorn was awarded the Available, Functional, and Reproducible badges from EuroSys'22, thanks to dedicated work by Shahriar as well as excellent collaborators, Rahul Krishna, Mohammad Ali Javidian, and Baishakhi Ray. Because we learned a lot from previous rejections of this work, we are releasing all reviews and rebuttals to help other awesome researchers in our community. |

| 01/30/22 | I am so delighted that Sonam Kharde has joined AISys as a postdoc. She will be working on Causal AI for Autonomous Systems. Welcome, Sonam! |

| 01/22/22 | UofSC's College of Engineering and Computing published an interview about our NSF project on Causal AI for Systems. |

| 01/10/22 | Unicorn has been accepted at EuroSys'22. We are grateful to all who provided feedback on this work, including Christian Kaestner, Sven Apel, Yuriy Brun, Emery Berger, Tianyin Xu, Vivek Nair, Jianhai Su, Miguel Velez, Tobius Durschmied, and the anonymous EuroSys'21 and EuroSys'22 reviewers. |

| 01/10/22 | I am honored and humbled to be among the recipients of UofSC's 2022 Breakthrough Stars Award. I owe this recognition to so many people, including brilliant graduate students and postdocs at AISys, my colleagues at UofSC, my collaborators around the globe, and my dear family. Without their support, none of this would have been imaginable. |

| 12/05/21 | Two papers were accepted at ICSE 2022 (On Debugging the Performance of Configurable Software Systems: Developer Needs and Tailored Tool Support) and NeurIPS WHY-21 (Scalable Causal Transfer Learning). Congrats, Miguel Velez, Om Pandey, and Mohammad Ali Javidian! |

| 10/27/21 | I am honored to become the academic mentor of Gamecock Robotics, a team of more than a dozen students who compete in international robotics leagues, including VEX Robotics. |

| 08/20/21 | A postdoc position (up to 3 years) is available at AISys on Causal AI for Systems. Please apply here. |

| 08/09/21 | I am thrilled Causal Performance Debugging for Highly-Configurable Systems has been funded by NSF ♡. This is a collaborative project on Causal AI for Systems with Christian Kaestner (CMU) and Baishakhi Ray (Columbia) with total funding of $1,200,000. |

| 07/29/21 | We have released a demo of our NASA RASPBERRY-SI project on AI-based autonomy for the Europa Lander, which aims to find life on Jupiter's moon Europa. Thanks, NASA ♡. |

| 06/22/21 | I am thrilled that RTG: Mathematical Foundation of Data Science at University of South Carolina has been funded by NSF ♡. This is a collaborative training project with my genius colleagues in mathematics, Wolfgang Dahmen, Linyuan Lu (PI), Wuchen Li, and Qi Wang, on the Mathematical Foundation of AI and ML. |

| 06/18/21 | A new way to ‘see’: A story about our NSF SmartSight project on AI for Social Good has been published in UofSC's research magazine. |

| 06/04/21 | I am so delighted that I (together with Valerie Issarny) will serve as the PC co-chair of SEAMS 2023 colocated with ICSE 2023 in Melbourne. Meanwhile, please do consider submitting to SEAMS 2022! |

| 05/10/21 | I am so delighted to announce that I am now a Visiting Researcher at Google! I will be working on Causal Representation Learning, Adversarial ML, and Self-Supervised Learning. |

Current Projects

AdaptiveFlow (2022–)

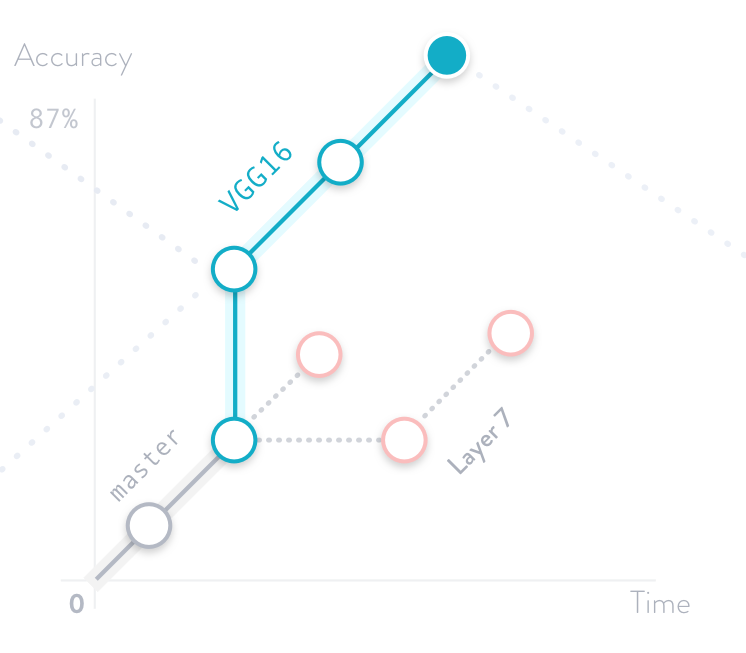

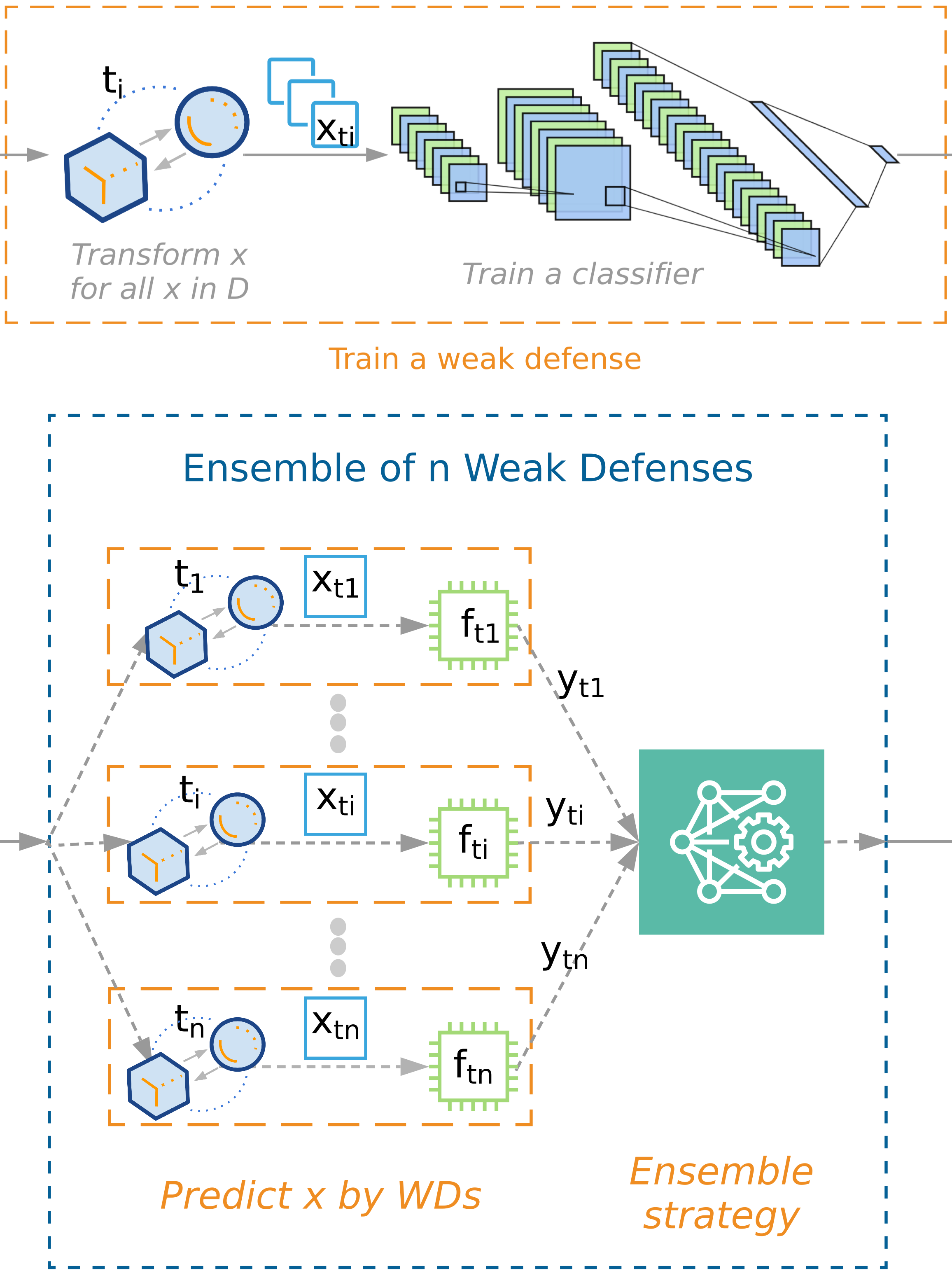

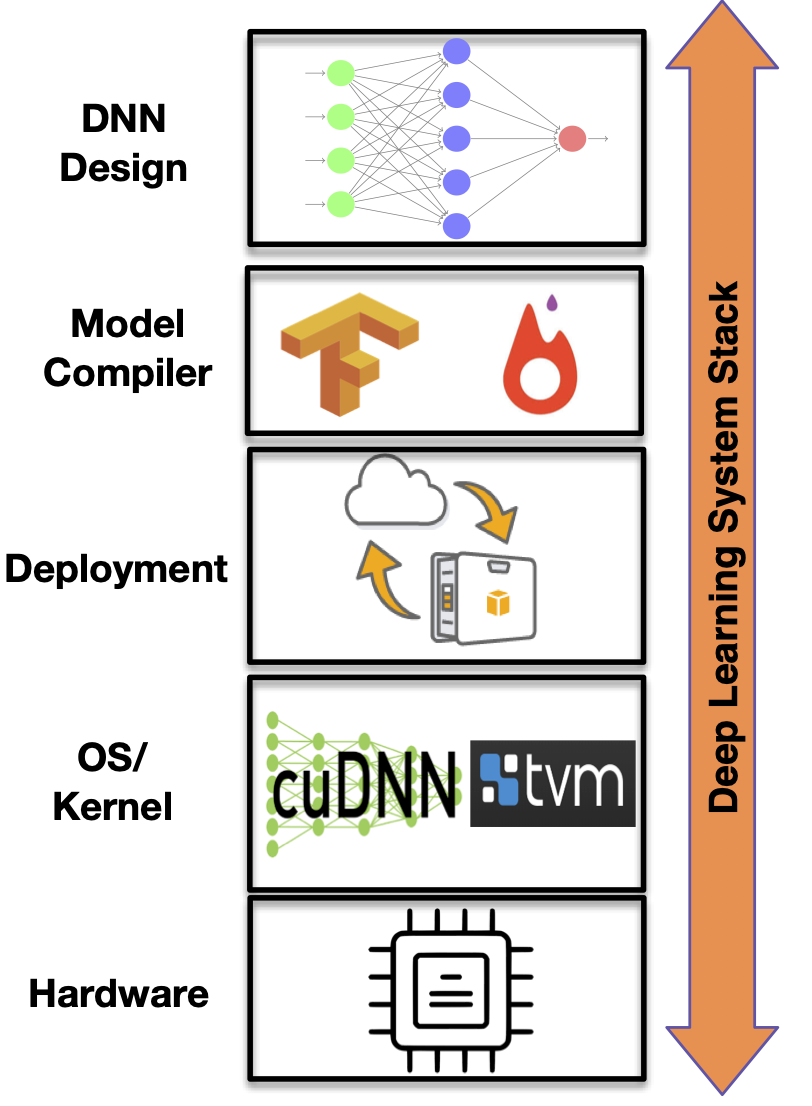

In this collaborative project, we develop infrastructure, runtime methods, and tools to enable performance optimization of ML inference pipelines through runtime dataflow restructuring, resource adaptation, model switching, and configuration tuning of model server knobs, such as batch sizes. This project is led by the University of South Carolina (US), in collaboration with Queen Mary University of London (UK), Technical University of Darmstadt (Germany), Vrije Universiteit Amsterdam (Netherlands), and industrial partners, including Roblox (US). It is one of the projects in my group related to Design for Sustainability in Computing.
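To give a flavor of what model switching and batch-size tuning can mean, here is a minimal sketch and not the project's actual implementation. It assumes a made-up linear latency model and two hypothetical model variants (`light`, `heavy`); every name and number is illustrative. For a given request load, it picks the most accurate variant and batch size that still meet a latency objective.

```python
from itertools import product
from typing import NamedTuple, Optional, Sequence, Tuple


class Variant(NamedTuple):
    """Hypothetical model variant; all numbers are illustrative assumptions."""
    name: str
    accuracy: float      # higher is better
    base_ms: float       # fixed latency per batch
    per_item_ms: float   # extra latency per request in the batch


def batch_latency_ms(v: Variant, batch: int) -> float:
    # Assumed linear latency model: fixed cost plus per-request cost.
    return v.base_ms + v.per_item_ms * batch


def throughput_rps(v: Variant, batch: int) -> float:
    # Requests served per second when batches run back to back.
    return 1000.0 * batch / batch_latency_ms(v, batch)


def choose(variants: Sequence[Variant], batch_sizes: Sequence[int],
           slo_ms: float, load_rps: float) -> Optional[Tuple[Variant, int]]:
    """Return the most accurate (variant, batch) meeting the SLO and the load."""
    feasible = [
        (v, b) for v, b in product(variants, batch_sizes)
        if batch_latency_ms(v, b) <= slo_ms and throughput_rps(v, b) >= load_rps
    ]
    if not feasible:
        return None
    # Prefer higher accuracy; break ties with the smaller batch size.
    return max(feasible, key=lambda vb: (vb[0].accuracy, -vb[1]))


VARIANTS = [Variant("light", 0.70, 4.0, 1.0), Variant("heavy", 0.80, 20.0, 4.0)]
BATCH_SIZES = [1, 2, 4, 8]

low_load = choose(VARIANTS, BATCH_SIZES, slo_ms=50.0, load_rps=100.0)
high_load = choose(VARIANTS, BATCH_SIZES, slo_ms=50.0, load_rps=300.0)
print(low_load[0].name, low_load[1])
print(high_load[0].name, high_load[1])
```

Under these assumed numbers, the sketch keeps the heavier variant while the load is low and switches to the lighter one once the load exceeds what the heavier variant can sustain within the latency objective.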

AI for Symbolic Math (2021–)

Mathematics is among the most precious parts of human heritage. To preserve mathematical knowledge even beyond the human era, in collaboration with the University of Tartu, we develop new methods to solve symbolic math tasks (differentiation, integration, PDE, linear algebra, etc.) with machine learning models such as neural networks. The key ideas behind our approach are to exploit the underlying structures (e.g., tree-like structures) behind symbolic math tasks and to transform these tasks into tasks, such as language translation, that deep learning models can do well.
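As a small illustration of the tree-to-sequence idea (a sketch under our own assumptions, not the code used in the project), an expression tree can be flattened into a prefix-notation token sequence that a sequence-to-sequence model could consume, and parsed back without loss. The `Node` type, the operator names, and the `ARITY` table are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple, Union


@dataclass(frozen=True)
class Node:
    """An operator applied to child expressions (hypothetical representation)."""
    op: str
    children: Tuple["Expr", ...]


Expr = Union[Node, str]  # a leaf is just a symbol or constant token

# Number of arguments per operator; assumed for this sketch.
ARITY = {"diff": 2, "mul": 2, "add": 2, "sin": 1, "cos": 1}


def to_prefix(expr: Expr) -> List[str]:
    """Flatten an expression tree into prefix (Polish) notation tokens."""
    if isinstance(expr, str):
        return [expr]
    tokens = [expr.op]
    for child in expr.children:
        tokens.extend(to_prefix(child))
    return tokens


def from_prefix(tokens: List[str]) -> Expr:
    """Rebuild the tree from prefix tokens using the arity table."""
    def parse(i: int):
        tok = tokens[i]
        i += 1
        if tok not in ARITY:
            return tok, i
        children = []
        for _ in range(ARITY[tok]):
            child, i = parse(i)
            children.append(child)
        return Node(tok, tuple(children)), i

    expr, end = parse(0)
    if end != len(tokens):
        raise ValueError("trailing tokens after a complete expression")
    return expr


# The task "differentiate x * sin(x) with respect to x" as a tree.
task = Node("diff", (Node("mul", ("x", Node("sin", ("x",)))), "x"))
source_tokens = to_prefix(task)
print(" ".join(source_tokens))
```

Because every operator has a fixed arity, the prefix sequence needs no parentheses, which is what makes the task look like translating one token sequence (the problem) into another (the solution).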

NASA RASPBERRY-SI (2020–)

In this collaborative project (USC, CMU, York, UArk, NASA JPL/Ames/GRC), we develop technologies that enable learning-based autonomous planning and adaptation of space landers.

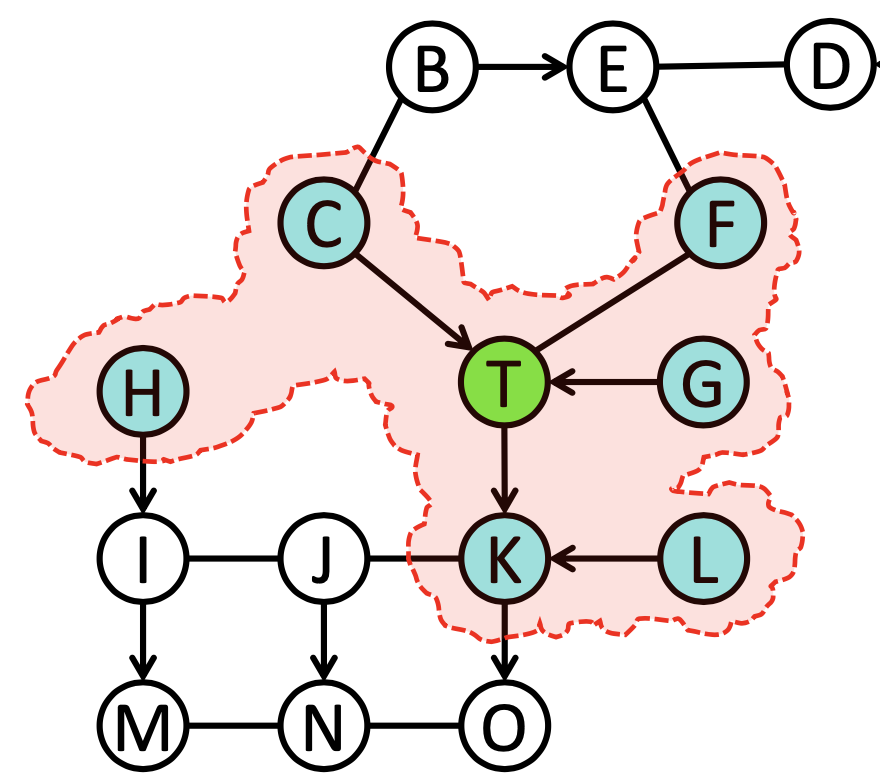

Causal AI: Structure Learning and Transfer Learning (2018–)

In this project, we are addressing the following important questions from theoretical and empirical perspectives: (i) How can we learn reliable causal structures from data, and how can we use the learned causal structures to identify causal invariances across environments? (ii) How can we use causal structure learning and counterfactual reasoning based on causal invariances for systems problems? In particular, we are interested in applying these theoretical advances to explainable and guided performance debugging of highly configurable systems.

ATHENA (2019–)

Despite achieving state-of-the-art performance across many domains, machine learning systems are highly vulnerable to subtle adversarial perturbations. In this project, we propose Athena, an extensible framework for building effective defenses to adversarial attacks against machine learning systems.

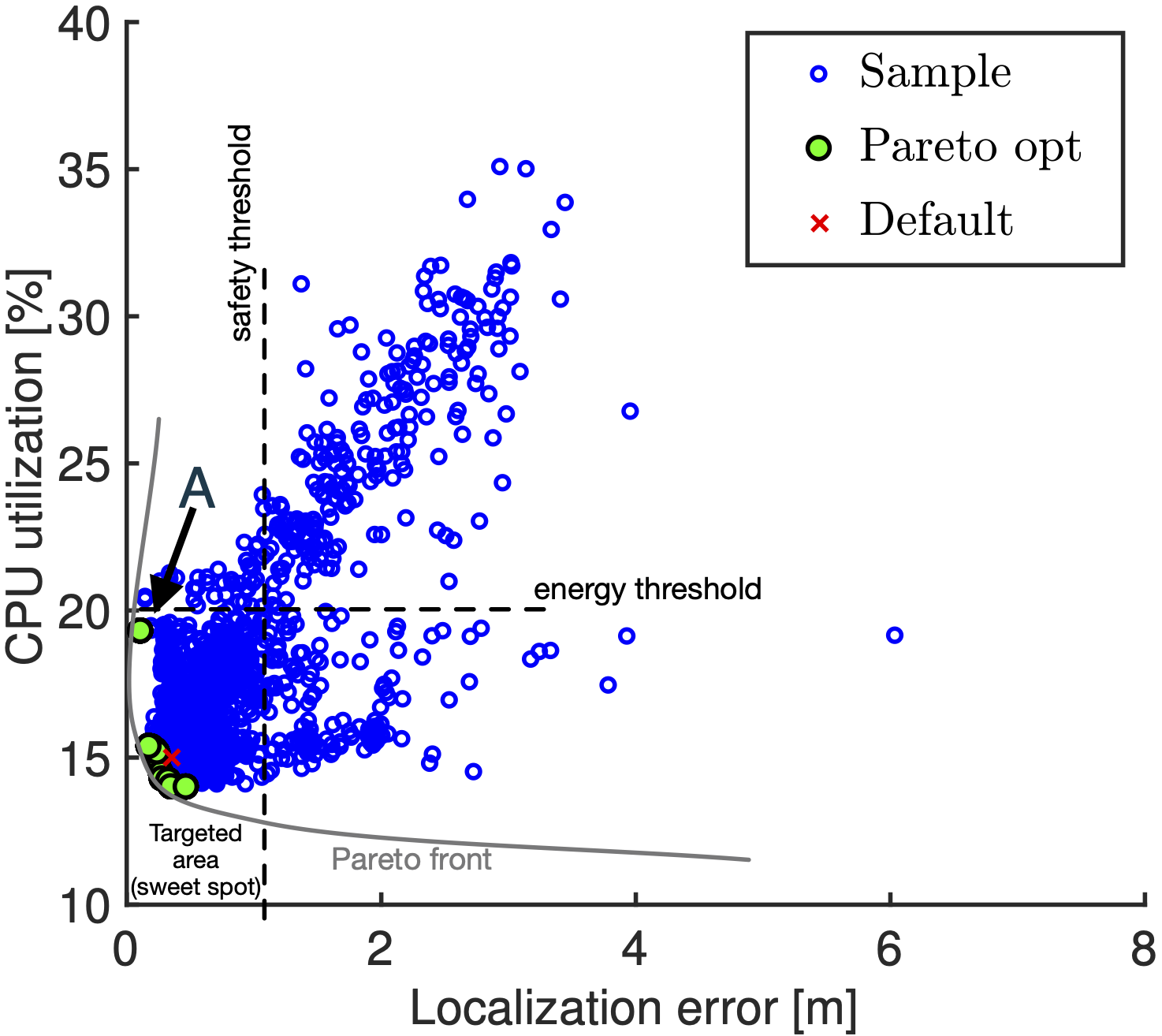

FlexiBO (2018–)

One of the key challenges in designing machine learning systems is to determine the right balance among several objectives, which are often incommensurable and conflicting. For example, when designing deep neural networks (DNNs), one often has to trade off multiple objectives, such as accuracy, energy consumption, and inference time. We developed FlexiBO, a flexible Bayesian optimization method, to address this issue.
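To make the notion of conflicting objectives concrete, the following sketch filters a few hypothetical DNN configurations down to their Pareto front, that is, the configurations that no other configuration beats on every objective at once. This is only an illustration of the trade-off, not the FlexiBO algorithm, and all names and numbers are made up.

```python
from typing import List, NamedTuple, Sequence


class Candidate(NamedTuple):
    """Hypothetical DNN configuration with illustrative measurements."""
    name: str
    accuracy: float    # higher is better
    energy_j: float    # lower is better
    latency_ms: float  # lower is better


def dominates(a: Candidate, b: Candidate) -> bool:
    """True if a is no worse than b on all objectives and better on at least one."""
    no_worse = (a.accuracy >= b.accuracy
                and a.energy_j <= b.energy_j
                and a.latency_ms <= b.latency_ms)
    strictly_better = (a.accuracy > b.accuracy
                       or a.energy_j < b.energy_j
                       or a.latency_ms < b.latency_ms)
    return no_worse and strictly_better


def pareto_front(cands: Sequence[Candidate]) -> List[Candidate]:
    """Keep only the candidates that no other candidate dominates."""
    return [c for c in cands if not any(dominates(o, c) for o in cands)]


CANDIDATES = [
    Candidate("small", 0.88, 1.0, 5.0),
    Candidate("medium", 0.92, 2.5, 9.0),
    Candidate("large", 0.95, 6.0, 20.0),
    Candidate("wasteful", 0.90, 3.0, 12.0),  # worse than "medium" on all three
]

front_names = [c.name for c in pareto_front(CANDIDATES)]
print(front_names)
```

Three of the four configurations survive: each of them is the best choice for some weighting of accuracy, energy, and latency, so picking one is a design decision rather than a single optimum.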

BRASS MARS (2016–)

How do you build a software system that can function for a century without being touched by a human engineer? This is the herculean task being undertaken by DARPA's Building Resource Adaptive Software Systems (BRASS) program. We, at UofSC with several collaborators at CMU, have been developing learning mechanisms to be integrated with quantitative planning to adapt and reconfigure robots so that they can overcome environmental changes at runtime.